Multi-AI-advisor-MCP

Council of Decision Models


Multi-Model Advisor

(锵锵四人行)

A Model Context Protocol (MCP) server that queries multiple Ollama models and combines their responses, providing diverse AI perspectives on a single question. This creates a "council of advisors" approach where Claude can synthesize multiple viewpoints alongside its own to provide more comprehensive answers.

graph TD

A[Start] --> B[Worker Local AI 1 Opinion]

A --> C[Worker Local AI 2 Opinion]

A --> D[Worker Local AI 3 Opinion]

B --> E[Manager AI]

C --> E

D --> E

E --> F[Decision Made]
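The diagram can be read as a fan-out/fan-in pattern: the same question goes to every worker at once, and the manager receives whatever came back. The sketch below illustrates that shape only. The names `Advisor`, `gatherOpinions`, and `briefForManager` are hypothetical and are not taken from this project's source.

```typescript
// Hypothetical types for illustration; not part of this project's API.
type Advisor = (question: string) => Promise<string>;

interface Opinion {
  advisor: string;
  ok: boolean;
  text: string; // the answer, or the error text when ok is false
}

// Fan out: ask every advisor concurrently.
// Fan in: return one Opinion per advisor, in the order the advisors were given.
async function gatherOpinions(
  question: string,
  advisors: Record<string, Advisor>,
): Promise<Opinion[]> {
  const askOne = async (name: string): Promise<Opinion> => {
    try {
      return { advisor: name, ok: true, text: await advisors[name](question) };
    } catch (err) {
      // A failing worker is recorded instead of aborting the whole round.
      return { advisor: name, ok: false, text: String(err) };
    }
  };
  return Promise.all(Object.keys(advisors).map(askOne));
}

// Build the text handed to the manager, which makes the final decision.
function briefForManager(question: string, opinions: Opinion[]): string {
  const lines = opinions.map((o) =>
    o.ok ? `${o.advisor}: ${o.text}` : `${o.advisor}: (no answer: ${o.text})`,
  );
  return [`Question: ${question}`, ...lines].join("\n");
}
```

Catching errors per worker means one unavailable model does not prevent the manager from seeing the other opinions.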

Features

- Query multiple Ollama models with a single question

- Assign different roles/personas to each model

- View all available Ollama models on your system

- Customize system prompts for each model

- Configure via environment variables

- Integrate seamlessly with Claude for Desktop

Prerequisites

- Node.js 16.x or higher

- Ollama installed and running (see Ollama installation)

- Claude for Desktop (for the complete advisory experience)

Installation

Installing via Smithery

To install multi-ai-advisor-mcp for Claude Desktop automatically via Smithery:

npx -y @smithery/cli install @YuChenSSR/multi-ai-advisor-mcp --client claude

Manual Installation

- Clone this repository:

git clone https://github.com/YuChenSSR/multi-ai-advisor-mcp.git

cd multi-ai-advisor-mcp

- Install dependencies:

npm install

- Build the project:

npm run build

- Install required Ollama models:

ollama pull gemma3:1b

ollama pull llama3.2:1b

ollama pull deepseek-r1:1.5b

Configuration

Create a .env file in the project root with your desired configuration:

# Server configuration

SERVER_NAME=multi-model-advisor

SERVER_VERSION=1.0.0

DEBUG=true

# Ollama configuration

OLLAMA_API_URL=http://localhost:11434

DEFAULT_MODELS=gemma3:1b,llama3.2:1b,deepseek-r1:1.5b

# System prompts for each model

GEMMA_SYSTEM_PROMPT=You are a creative and innovative AI assistant. Think outside the box and offer novel perspectives.

LLAMA_SYSTEM_PROMPT=You are a supportive and empathetic AI assistant focused on human well-being. Provide considerate and balanced advice.

DEEPSEEK_SYSTEM_PROMPT=You are a logical and analytical AI assistant. Think step-by-step and explain your reasoning clearly.
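As a rough illustration of how these variables might be consumed, the sketch below parses `DEFAULT_MODELS` and pairs each model with a system prompt. It is not this project's actual loader: the function name `loadAdvisorConfig`, the rule that maps `gemma3:1b` to `GEMMA_SYSTEM_PROMPT` by its leading letters, and the fallback URL are all assumptions made for the example.

```typescript
// Hypothetical shape for illustration; the real server may store this differently.
interface AdvisorConfig {
  apiUrl: string;
  models: string[];
  systemPrompts: Record<string, string>; // keyed by full model name
}

type Env = Record<string, string | undefined>;

function loadAdvisorConfig(env: Env): AdvisorConfig {
  // Assumption: fall back to Ollama's default local address when unset.
  const apiUrl = env["OLLAMA_API_URL"] ?? "http://localhost:11434";

  // "a, b,c" -> ["a", "b", "c"], ignoring empty entries.
  const models = (env["DEFAULT_MODELS"] ?? "")
    .split(",")
    .map((m) => m.trim())
    .filter((m) => m.length > 0);

  const systemPrompts: Record<string, string> = {};
  for (const model of models) {
    // Assumption: the prompt variable is named after the model's leading
    // letters, e.g. "deepseek-r1:1.5b" -> "DEEPSEEK" -> DEEPSEEK_SYSTEM_PROMPT.
    const family = (model.match(/^[a-z]+/i)?.[0] ?? "").toUpperCase();
    const prompt = env[`${family}_SYSTEM_PROMPT`];
    if (prompt) systemPrompts[model] = prompt;
  }
  return { apiUrl, models, systemPrompts };
}
```

In a Node.js process this could be called as `loadAdvisorConfig(process.env)` once the `.env` file has been loaded into the environment.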

Connect to Claude for Desktop

- Locate your Claude for Desktop configuration file:

  - macOS: ~/Library/Application Support/Claude/claude_desktop_config.json

  - Windows: %APPDATA%\Claude\claude_desktop_config.json

- Edit the file to add the Multi-Model Advisor MCP server:

{
  "mcpServers": {
    "multi-model-advisor": {
      "command": "node",
      "args": ["/absolute/path/to/multi-ai-advisor-mcp/build/index.js"]
    }
  }
}

- Replace /absolute/path/to/ with the actual path to your project directory

- Restart Claude for Desktop
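If the configuration file already lists other MCP servers, the new entry has to be merged in rather than pasted over them. The helper below is a minimal sketch of that merge. The function name `withAdvisorServer` and the type names are hypothetical, and the sketch only builds the object; reading and writing the file is left out.

```typescript
interface McpServerEntry {
  command: string;
  args: string[];
}

// Only the mcpServers key is modelled; any other keys are passed through.
interface ClaudeDesktopConfig {
  mcpServers?: Record<string, McpServerEntry>;
  [key: string]: unknown;
}

// Return a copy of the config with the advisor entry added or replaced.
// entryPoint is the absolute path to build/index.js in your checkout.
function withAdvisorServer(
  config: ClaudeDesktopConfig,
  entryPoint: string,
): ClaudeDesktopConfig {
  return {
    ...config,
    mcpServers: {
      ...(config.mcpServers ?? {}),
      "multi-model-advisor": { command: "node", args: [entryPoint] },
    },
  };
}
```

Passing the result to `JSON.stringify(updated, null, 2)` produces text in the same layout as the snippet above.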

Usage

Once connected to Claude for Desktop, you can use the Multi-Model Advisor in several ways:

List Available Models

You can see all available models on your system:

Show me which Ollama models are available on my system

This will display all installed Ollama models and indicate which ones are configured as defaults.
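For readers curious what such a listing involves, Ollama reports installed models at `GET /api/tags`, returning a JSON object with a `models` array whose entries have a `name` field. The sketch below queries that endpoint and marks the configured defaults. The function names, the `(default)` label, and the injected `HttpGet` type are assumptions for the example, not this server's implementation.

```typescript
// Minimal view of the /api/tags response; real entries carry more fields.
interface TagsResponse {
  models: { name: string }[];
}

// The HTTP function is injected so the sketch does not depend on a runtime.
type HttpGet = (url: string) => Promise<{
  ok: boolean;
  status: number;
  json(): Promise<unknown>;
}>;

async function listInstalledModels(get: HttpGet, apiUrl: string): Promise<string[]> {
  const res = await get(`${apiUrl}/api/tags`);
  if (!res.ok) throw new Error(`Ollama returned HTTP ${res.status}`);
  const body = (await res.json()) as TagsResponse;
  return body.models.map((m) => m.name);
}

// Append a marker to every installed model that is also a configured default.
function markDefaults(installed: string[], defaults: string[]): string[] {
  return installed.map((name) =>
    defaults.indexOf(name) !== -1 ? `${name} (default)` : name,
  );
}
```

On Node.js 18 or newer, where `fetch` is built in, this could be called as `listInstalledModels(fetch, "http://localhost:11434")`.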

Basic Usage

Simply ask Claude to use the multi-model advisor:

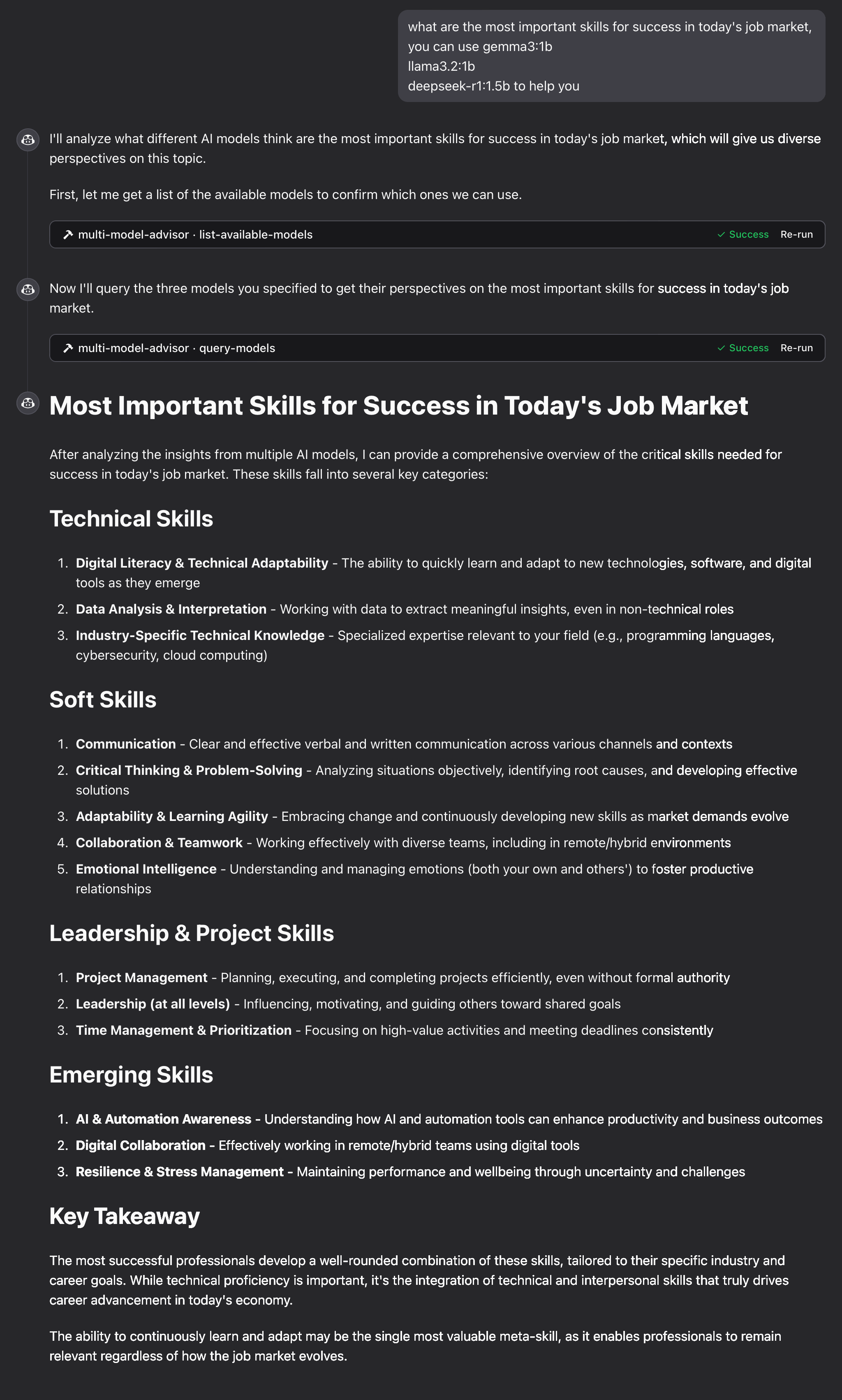

what are the most important skills for success in today's job market,

you can use gemma3:1b, llama3.2:1b, deepseek-r1:1.5b to help you

Claude will query all default models and provide a synthesized response based on their different perspectives.

How It Works

- The MCP server exposes two tools:

  - list-available-models: Shows all Ollama models on your system

  - query-models: Queries multiple models with a question

- When you ask Claude a question referring to the multi-model advisor:

  - Claude decides to use the query-models tool

  - The server sends your question to multiple Ollama models

  - Each model responds with its perspective

  - Claude receives all responses and synthesizes a comprehensive answer

- Each model can have a different "persona" or role assigned, encouraging diverse perspectives.
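The request side of this flow can be sketched against Ollama's `POST /api/generate` endpoint, which accepts `model`, `prompt`, an optional `system` prompt, and `stream`, and returns the generated text in a `response` field when streaming is off. The code below is an illustration under those assumptions. The names `buildGenerateRequest`, `askModel`, and `formatCouncil`, and the bracketed layout of the combined text, are hypothetical and may differ from what the server actually sends to Claude.

```typescript
interface GenerateRequest {
  model: string;
  prompt: string;
  system?: string; // the persona for this model, if one is configured
  stream: false;   // ask for a single JSON object instead of a stream
}

function buildGenerateRequest(
  model: string,
  question: string,
  systemPrompt?: string,
): GenerateRequest {
  const request: GenerateRequest = { model, prompt: question, stream: false };
  if (systemPrompt) request.system = systemPrompt;
  return request;
}

// Injected HTTP function; the built-in fetch of Node.js 18+ fits this shape.
type HttpPost = (
  url: string,
  init: { method: "POST"; headers: Record<string, string>; body: string },
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

async function askModel(
  post: HttpPost,
  apiUrl: string,
  request: GenerateRequest,
): Promise<string> {
  const res = await post(`${apiUrl}/api/generate`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(request),
  });
  if (!res.ok) {
    throw new Error(`Ollama returned HTTP ${res.status} for ${request.model}`);
  }
  const body = (await res.json()) as { response: string };
  return body.response;
}

// Combine the answers into one text, labelled by model, for Claude to read.
function formatCouncil(answers: { model: string; text: string }[]): string {
  return answers.map((a) => `[${a.model}]\n${a.text.trim()}`).join("\n\n");
}
```

Labelling each answer with its model name lets Claude attribute a viewpoint to the persona that produced it when synthesizing the final response.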

Troubleshooting

Ollama Connection Issues

If the server can't connect to Ollama:

- Ensure Ollama is running (ollama serve)

- Check that the OLLAMA_API_URL is correct in your .env file

- Try accessing http://localhost:11434 in your browser to verify Ollama is responding

Model Not Found

If a model is reported as unavailable:

- Check that you've pulled the model using ollama pull <model-name>

- Verify the exact model name using ollama list

- Use the list-available-models tool to see all available models
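The checks above amount to comparing the models you asked for against the names that ollama list reports. A small sketch of that comparison follows. The function names are hypothetical, and the sketch assumes that a name written without a tag refers to the `latest` tag.

```typescript
// Assumption: "llama3.2" and "llama3.2:latest" refer to the same model.
function normalizeModelName(name: string): string {
  const trimmed = name.trim();
  // Only look for a tag after the last "/", so a namespace is left alone.
  const lastSegment = trimmed.slice(trimmed.lastIndexOf("/") + 1);
  return lastSegment.indexOf(":") === -1 ? `${trimmed}:latest` : trimmed;
}

// Return one "ollama pull" command per requested model that is not installed.
function pullCommandsFor(requested: string[], installed: string[]): string[] {
  const have = new Set(installed.map(normalizeModelName));
  return requested
    .map(normalizeModelName)
    .filter((name) => !have.has(name))
    .map((name) => `ollama pull ${name}`);
}
```

An empty result means every requested model is already present under the exact name used in the request.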

Claude Not Showing MCP Tools

If the tools don't appear in Claude:

- Ensure you've restarted Claude after updating the configuration

- Check that the absolute path in claude_desktop_config.json is correct

- Look at Claude's logs for error messages

Not Enough RAM

Claude, acting as the manager AI, may select larger models than your machine has memory to run. If that happens, name smaller models explicitly in your prompt (see Basic Usage) or add more memory.

License

MIT License

For more details, see the LICENSE file in this project's repository.

Contributing

Contributions are welcome! Please feel free to submit a Pull Request.

